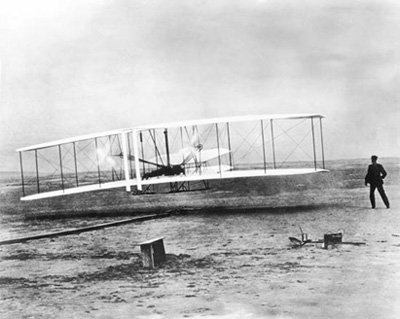

The Wright Flyer looks light and insubstantial in images, but on September 17, 1908, the world-changing machine nearly killed its inventor. A propeller snapped as Orville was demonstrating an improved version of the Flyer, causing a crash that broke four of his ribs. In that moment the physical, technical and historical weight of the invention is made starkly clear.

In the modern day, innovation is associated with ‘small’ and ‘nimble’. Whether it’s an organization, a technology, or even a philosophy, the term ‘big’ conjures up images of gas-guzzling SUVs and bloated bureaucracies. But as the Wright brothers showed us, world-changing innovation requires an acceptance of ‘big’. It requires big ideas, like experimenting with gliders in an age when experts suggested that powered flight might not happen until the 21st century. It requires big risks, like continuing on after one of the legends of the field was himself killed in an accident. Most of all, it requires big hardware. The Wright Flyer weighed many hundreds of pounds, and simply getting it to test sites presented an enormous logistical challenge to their experimentation.

Big hardware does not get talked about often enough in the narrative around innovation. Simply put, going to where nobody has ever gone before requires tools that nobody has ever used before. Putting a man on the moon also required some serious hardware – it’s not the sort of thing where you can go halfway and then search for more funding. It is the exact same story whether diving into the structure of DNA, or bringing America into the future with a Gigafactory.

And it’s certainly the same story in computing. Bringing consumers into virtual reality will require computing power unlike any that we have seen before. The Internet of Things, where the conversation tends to focus on the little “things”, will be enabled by a massive infrastructure to build the communication links that turn the disparate devices into a connected cloud.

And those are just the innovations that are already in motion. Imagine what could come next. Leveraging big data coming off of mobile technology to automate diagnoses? Harnessing scientific breakthroughs in biology to synthesize new life? All of that will require an enormous amount of simulation and automation. Human-level artificial intelligence will require even more. Estimates vary for the power of the human brain in computing terms, but all of them involve mind-boggling amounts of processing power. And, like an early horseless carriage, prototype AI will not be sleekly optimized, so getting even preliminary results will require extensive computational horsepower.

Mainframes are clearly the field of computing best poised to meet these challenges, allowing organizations to deploy the necessary computing power quickly and effectively. It’s frustrating to realize that the mainframe technology that enables tomorrow’s world-changing innovation will probably not be properly celebrated by history. Does anyone remember Charlie Taylor? If you want to be celebrated, win an Emmy. But if you want to be part of solving humanity’s hardest problems, developing big, risky, innovative hardware is the place to be.

Regular Planet Mainframe Blog Contributor

Matt Ritter writes about business intelligence, data science, and whatever else he can get numbers about at Preinvented Wheel.